Mads Damsbo & José González

Virtual Production, image & video diffusion models

“A Perfect Storm” is Bacon Lab's first public experiment. We wanted to prompt and generate a complete cinematic universe to better understand our human responsibility in the age of AI. We found that it still demands a ton of human labor and decision-making to create something of value.

Here's what we tried, what we learned, and what surprised us.

We made a music video on an LED stage with a synthetic cast, a real musician, and no locations. Then we asked: did it work?

This film is a product of human creativity, enabled by artificial intelligence. Only one person was in front of a camera, and no locations were used.

Head of Lab Mads Damsbo and José González set out to use AI in full view. Not to disguise it, but to hold it up to the light.

The question was never can we do this. It was what happens when we do.

THE FILM

THE EXPERIMENT

Bacon Lab is a creative research lab exploring emergent technologies and production methods. Its projects aren't demos. They're designed to surface real questions about craft, impact, and what changes when the tools do.

"AI is evolving at incredible speeds and is ushering filmmakers into a new paradigm. We, at Bacon, are acutely aware of the ethical and moral implications of using artificial actors, but at the same time we feel it's absolutely necessary to explore the creative boundaries of this new medium and the actual impact it has on a real audience." – Mads Damsbo, Head of Lab

This is Bacon Lab's first public experiment. It won't be the last.

ABOUT BACON LAB

WHO'S BEHIND THIS?

Before anything was generated, five questions were put on the table.

Here's what happened when we tried to answer them.

THE HYPOTHESES

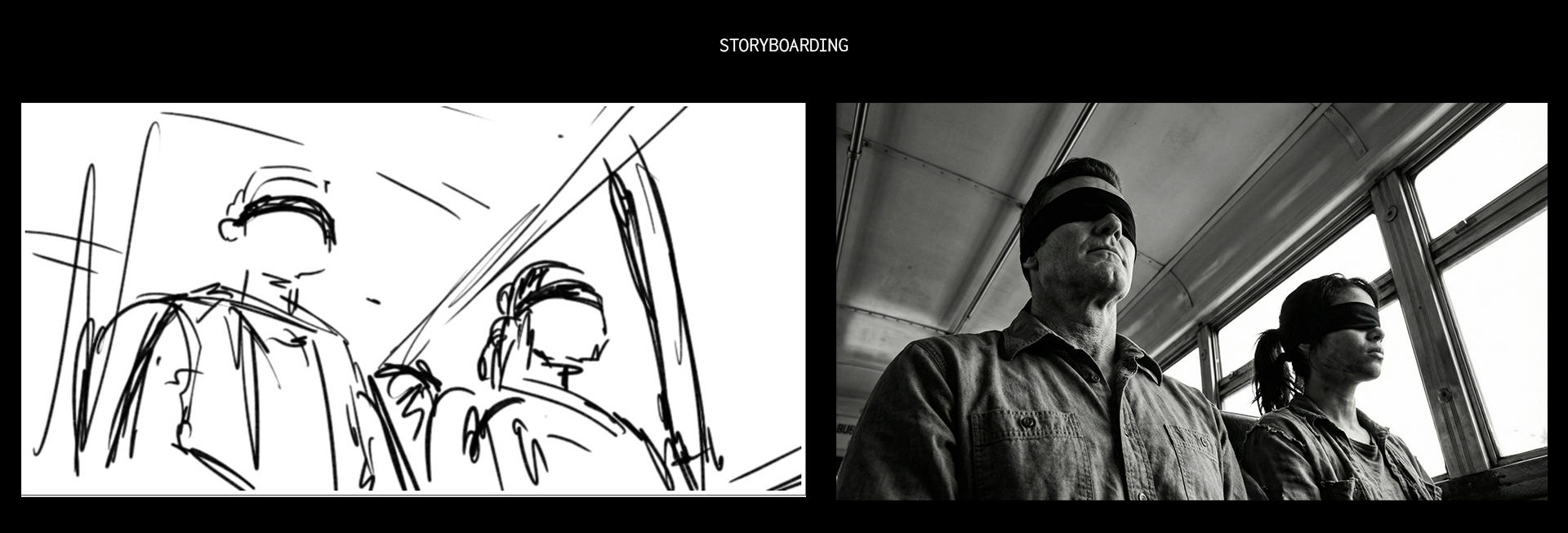

Can we make the film before we make the film?

HYPOTHESIS 1

Using storyboards fed into image and video models, we built a fully animated, photorealistic animatic of the entire video. A rough cut that looked almost like the real thing before a single shot was generated.

Learning: A well-drawn storyboard is non-negotiable. When you hand framing decisions to the model, you get generic results. The specificity of what you want has to come from you.

Can we find a cinematic style that doesn't feel like AI?

HYPOTHESIS 2

We pulled analogue references, shot test footage on a virtual production stage, applied a LUT to establish a visual language, and trained with a 35mm LoRA to push the image toward something worn-in and physical.

Learning: Old superspeed lenses and black-and-white coverage do a lot of the heavy lifting. They camouflage the tendencies of the models. Shooting references on set and including them in prompts keeps consistency across generations. Prompting for flat LOG-C4 colour space preserves detail in the blacks and whites. A Topaz decompression pass in post recovers dynamic range the model flattens.

Can we cast believable synthetic humans?

HYPOTHESIS 3

We built a library of reference images, people who don't exist, and let the models generate a cascade of characters. From that, we cast eight key roles. Then we did casting tapes: putting each character through direction to see how they responded.

Learning: You need a clear picture of who you're looking for before you start. And once you have a face, run a reverse image search. Every time. Synthetic characters can drift uncomfortably close to real people. That's not a grey area.

Also, character consistency is a real hassle. Image models hallucinate, and video models alter the microexpressions that help us recognize someone. We should have trained a specific LoRA for each synthetic actor.

Can we generate 8K 360° plates for a virtual production stage?

HYPOTHESIS 4

Filming on LED requires full-sphere equirectangular content at 8K. We needed plates that could hold up at scale, in motion. Nano Banana for the first frame, then Kling 2.6, handled the equirectangular format well: minimal stitching artefacts, tolerable lens distortion, one clean stitch line.

Learning: Creating a “sweet spot” plate, a 4K high-fidelity zone behind the camera's frustum, saved us. Outside this spot, generated plates show artefacts and softness, even after upscaling and decompression passes. The technology is close, but not there yet for wide-lens or high-movement shots.
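To get a feel for how much of an equirectangular plate a “sweet spot” has to cover, here is a minimal Python sketch that estimates the pixel region a camera frustum occupies on the sphere. It is an illustration, not the pipeline used on the film: the function names `frustum_bounds` and `sweet_spot_box`, the 8192×4096 plate size, the field-of-view values, and the safety margin are all assumptions. It uses the standard mapping where longitude spans the plate's width and latitude its height, and it assumes an ideal pinhole camera whose frustum does not contain a pole.

```python
import math


def frustum_bounds(hfov_deg, vfov_deg, pitch_deg=0.0, samples=32):
    """Return (lon_min, lon_max, lat_min, lat_max) in degrees, relative to the
    camera's yaw, for a pinhole frustum tilted up by pitch_deg.

    Assumption: the frustum does not contain the zenith or nadir.
    """
    tx = math.tan(math.radians(hfov_deg) / 2.0)
    ty = math.tan(math.radians(vfov_deg) / 2.0)
    p = math.radians(pitch_deg)
    lons, lats = [], []
    for i in range(samples + 1):
        t = -1.0 + 2.0 * i / samples
        # Walk the four edges of the image plane placed at distance z = 1.
        for x, y in ((t * tx, ty), (t * tx, -ty), (tx, t * ty), (-tx, t * ty)):
            # Tilt the ray about the horizontal axis (positive pitch looks up).
            y_r = y * math.cos(p) + math.sin(p)
            z_r = -y * math.sin(p) + math.cos(p)
            norm = math.sqrt(x * x + y_r * y_r + z_r * z_r)
            lons.append(math.degrees(math.atan2(x, z_r)))
            lats.append(math.degrees(math.asin(max(-1.0, min(1.0, y_r / norm)))))
    return min(lons), max(lons), min(lats), max(lats)


def sweet_spot_box(width, height, cam_yaw_deg, hfov_deg, vfov_deg,
                   pitch_deg=0.0, margin_deg=0.0):
    """Pixel bounding box of the frustum on a width x height equirectangular plate.

    Yaw 0 maps to the horizontal centre of the plate. Columns are not wrapped,
    so a box that crosses the plate's seam has left < 0 or right > width.
    """
    lon_min, lon_max, lat_min, lat_max = frustum_bounds(hfov_deg, vfov_deg, pitch_deg)
    left = ((cam_yaw_deg + lon_min - margin_deg) / 360.0 + 0.5) * width
    right = ((cam_yaw_deg + lon_max + margin_deg) / 360.0 + 0.5) * width
    top = max(0.0, (0.5 - (lat_max + margin_deg) / 180.0) * height)
    bottom = min(float(height), (0.5 - (lat_min - margin_deg) / 180.0) * height)
    box = {
        "left": math.floor(left),
        "right": math.ceil(right),
        "top": math.floor(top),
        "bottom": math.ceil(bottom),
    }
    box["width_px"] = box["right"] - box["left"]
    box["height_px"] = box["bottom"] - box["top"]
    return box


if __name__ == "__main__":
    # Hypothetical lens: 90 x 60 degree field of view with a 5 degree margin.
    print(sweet_spot_box(8192, 4096, cam_yaw_deg=0, hfov_deg=90, vfov_deg=60,
                         margin_deg=5))
```

The takeaway of the sketch is that a moderate lens only sees a fraction of the full sphere, so concentrating resolution in that region is cheaper than pushing the whole plate to the same fidelity. Wider lenses and fast camera moves enlarge or shift the box, which matches the limitation noted above.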

Can a synthetic cast make you feel something?

HYPOTHESIS 5

We stripped the story down to its minimum. Moments of performance and chemistry that required as little acting as possible to land. Then we showed the film to audiences, some who knew it was synthetic and some who didn’t, and watched what happened.

Learning: Yes. Not unconditionally, not always, but real emotional response happens. Audiences felt something, especially (of course) those who did not know the characters were fabricated. But surprisingly, more often than not, so did the people who knew. That's the part we're still sitting with.

CONDUCT

HOW WE WORKED

Sticking to new principles of ethical use of AI.

Bacon Lab recently published its 6 C's, a code of conduct for the ethical use of AI in filmmaking. Every decision made on A Perfect Storm was made inside that framework. All synthetic actors were reverse image searched for unintentional likeness. No third-party material was used for prompting.

Learning: It’s tempting to think that making this film was easy. It wasn’t. With a tight deadline, the sheer velocity at which we could generate shots, and new models becoming available on a weekly basis, sticking to the ethical framework was a real challenge. Are we sure this image isn't copyright protected? Does this actor resemble someone? Where is our data going when using this new model? Far more time was spent answering these questions than actually being creative.

→ Read the Bacon Code of Conduct

A CONVERSATION

'A Perfect Storm' - A Conversation between José González & Mads Damsbo

A discussion on process and the future of AI in the arts

If you want to dive deeper into the making of the film, listen to José González and Mads Damsbo speak with journalist Christian von Essen about the process, the challenges, the surprising outcomes, and how both view the horizon ahead of us, bright or dark.

Nano Banana Pro, Flux 2 with HerbstPhoto 35mm Lora

Kling 2.6 (first/last frame), Kling O3, Wan 2.5

Upscaling & decompression

All AI models were used in accordance with The Bacon Code of Conduct for AI.

TOOLS USED

THE STACK

Directed by:

Mads Damsbo, Head of Bacon Lab

Executive producer:

Samuel Cantor

Head of communications:

Lasse Cato

Focus puller:

Peter Topsøe-Jensen

Props master:

Freja Søndergaard

Props assistant:

Mathias Deeli

VFX supervisor:

Jonas Drehn

Creative concept artist:

Jesper Dalgaard

Comp and AI artists:

Virgil Gervig Kastrup, Cristian Predut

Sound design & mix:

Ballad

Sound designer & mixer:

Aurelius Adrian

Additional sound design:

Oscar Metcalf-Rinaldo

Graphic designer:

Polly Makarenkova

BTS videographer & photographer:

Milan Bjørnild

BTS photographer:

Elza Duka

Supported by:

Nordisk Film LED Stage

VP supervisors:

Mads Brydegaard Buus, Rik Kobro Hals

VP artists:

Frederik Nygaard Sejrsen, Rasmus L. Møller

Head of VP:

David Williams

credits
